About a year ago, I published a post covering how to monitor a Raspberry Pi using the open source Telegraf agent and VMware Aria Operations: https://enterpriseadmins.org/blog/virtualization/monitoring-a-raspberry-pi-with-telegraf-and-aria-operations

Recently, while rebuilding this configuration in a VCF 9.1 environment, I encountered a couple of changes that required updates to the original process:

- Updated InfluxData repository signing and package installation requirements

- Authentication workflow changes when using VCF 9.1 API tokens with the Telegraf Open Agent integration

The good news is that the remainder of the original workflow still functioned as expected after making these updates.

Although the original article focused on Raspberry Pi monitoring, these updates apply more broadly to Linux-based Telegraf Open Agent deployments, including x64 virtual machines and other supported systems.

Updated Telegraf Repository Configuration

When attempting to install or update Telegraf, apt update now produces the following error:

W: GPG error: https://repos.influxdata.com/ubuntu stable InRelease:

The following signatures couldn't be verified because the public key is not available:

NO_PUBKEY DA61C26A0585BD3B

E: The repository 'https://repos.influxdata.com/ubuntu stable InRelease' is not signed.

This occurs because older repository signing methods commonly used in previous installation examples have been deprecated.

The updated installation process now uses a dedicated keyring file under /etc/apt/keyrings.
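On some older releases the /etc/apt/keyrings directory may not exist by default (my understanding is that it is created automatically starting with Debian 12 and Ubuntu 22.04). A minimal sketch for creating it when missing; the function name `ensure_keyring_dir` is my own and not part of any package:

```shell
# Create the keyring directory with mode 0755 if it does not already exist.
# The path is a parameter so the function can be tried on a scratch directory first.
ensure_keyring_dir() {
  dir="${1:-/etc/apt/keyrings}"
  [ -d "$dir" ] || install -d -m 0755 "$dir"
}
```

For the real system path this needs root, so the equivalent one-liner is `sudo install -d -m 0755 /etc/apt/keyrings`.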

Cleanup / Removal Steps

Before adding the new repository, we may need to clean up the stale entries left behind by the original post. The fix is straightforward; we just need to delete two files:

sudo rm /etc/apt/keyrings/influxdata-archive_compat.key

sudo rm /etc/apt/sources.list.d/influxdata.list

With the optional cleanup complete, we can proceed to the updated installation steps.

Updated Installation Steps

The following commands successfully configured the repository and installed Telegraf in my testing:

curl --silent --location -O https://repos.influxdata.com/influxdata-archive.key

gpg --show-keys --with-fingerprint --with-colons ./influxdata-archive.key 2>&1 \
| grep -q '^fpr:\+24C975CBA61A024EE1B631787C3D57159FC2F927:$' \
&& cat influxdata-archive.key \
| gpg --dearmor \
| sudo tee /etc/apt/keyrings/influxdata-archive.gpg > /dev/null

echo 'deb [signed-by=/etc/apt/keyrings/influxdata-archive.gpg] https://repos.influxdata.com/debian stable main' \
| sudo tee /etc/apt/sources.list.d/influxdata.list

After configuring the repository:

sudo apt-get update

sudo apt-get install telegraf

This worked as expected.
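The fingerprint comparison in the gpg pipeline above is what keeps a tampered key file from being installed. As a rough sketch, the same check can be pulled into a small function that reads gpg's colon-delimited output on stdin. The name `has_fingerprint` is hypothetical, and I use `grep -E` here instead of the `\+` form from the original command:

```shell
# Succeed only when the colon-delimited gpg output on stdin contains an
# "fpr" record whose fingerprint field equals the expected value.
has_fingerprint() {
  expected="$1"
  grep -Eq "^fpr:+${expected}:\$"
}
```

Usage would look like `gpg --show-keys --with-fingerprint --with-colons ./influxdata-archive.key 2>&1 | has_fingerprint 24C975CBA61A024EE1B631787C3D57159FC2F927 && echo "fingerprint matches"`.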

Why This Changed

Modern Debian and Ubuntu-based distributions are moving away from the legacy apt-key approach for repository trust management. Instead, each repository's signing key is commonly stored in its own file under /etc/apt/keyrings/ and referenced from the source entry with the signed-by option, so the key is only trusted for the repository that names it.
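To confirm which keyring a given source entry is tied to, the signed-by value can be read back out of the .list file. This is a small sketch that assumes the one-line `deb [signed-by=...] ...` format used in this post; the function name `signed_by_path` is my own:

```shell
# Print the keyring path named by the signed-by option in an apt .list file.
# Assumes the classic one-line "deb [signed-by=...] url suite component" format.
signed_by_path() {
  sed -n 's/.*\[[^]]*signed-by=\([^] ]*\).*/\1/p' "$1"
}
```

Running `signed_by_path /etc/apt/sources.list.d/influxdata.list` should print the keyring path written earlier, which can then be checked with `ls -l`.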

Using VCF 9.1 API Tokens with the Telegraf Open Agent

With VCF 9.1, I also wanted to test using an API key-based authentication workflow instead of relying on a previously obtained Aria Operations token.

This process works as follows:

- Generate an API token from VMware Identity Broker (VIDB)

- POST the API token to VIDB

- Receive a Bearer token

- Present the Bearer token to VCF Operations

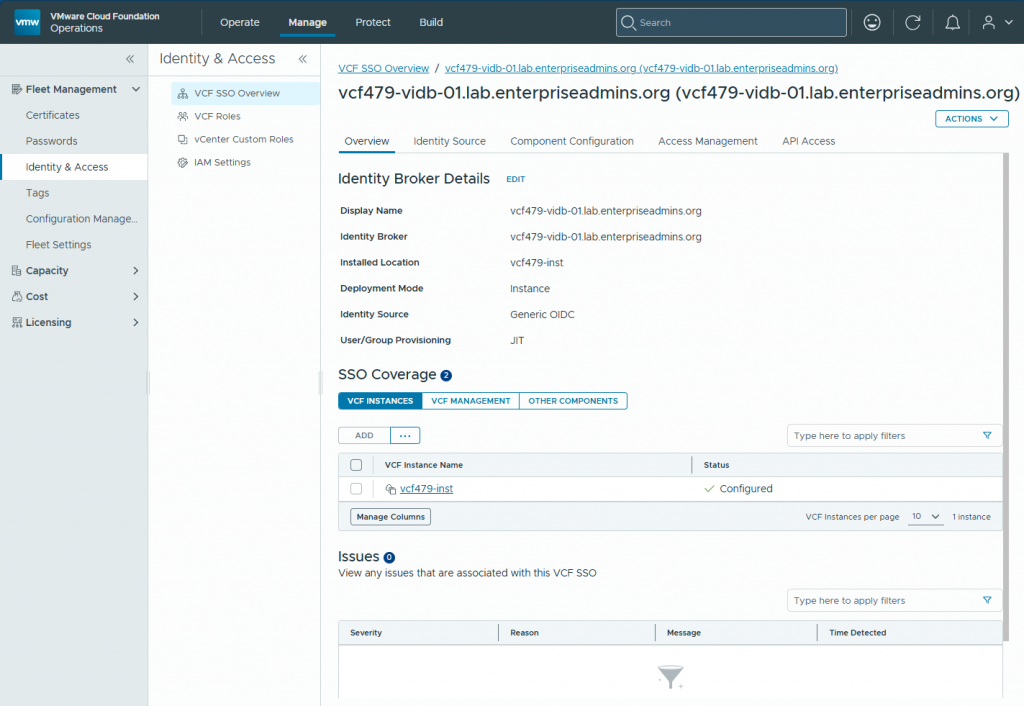

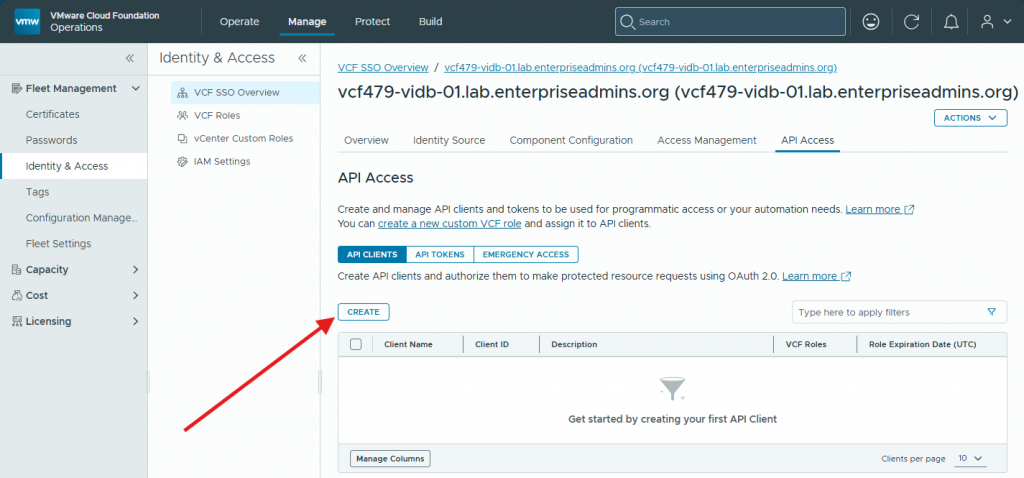

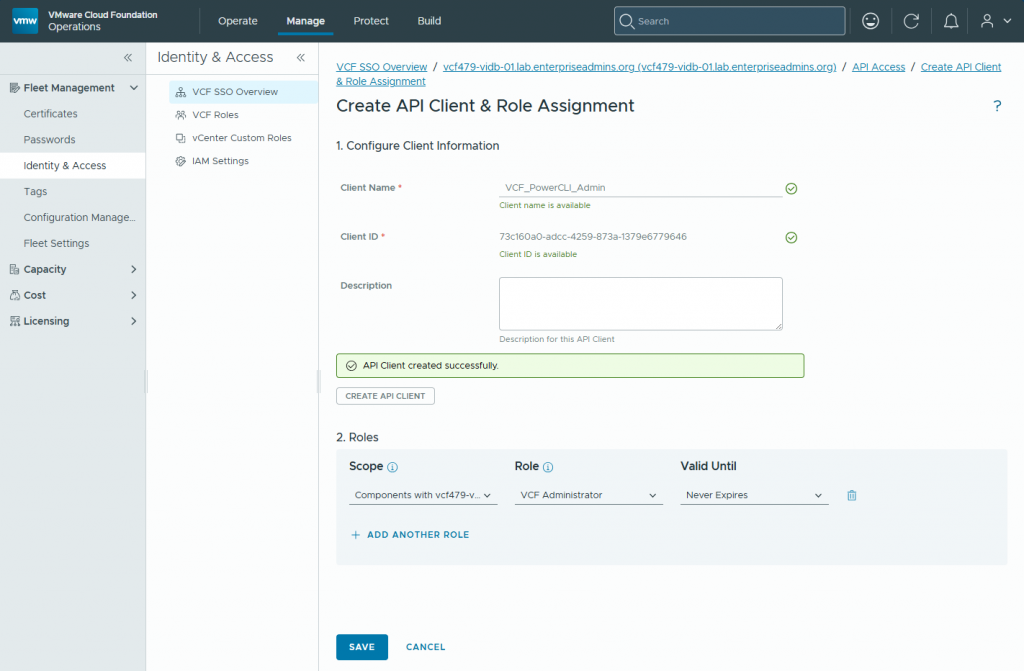

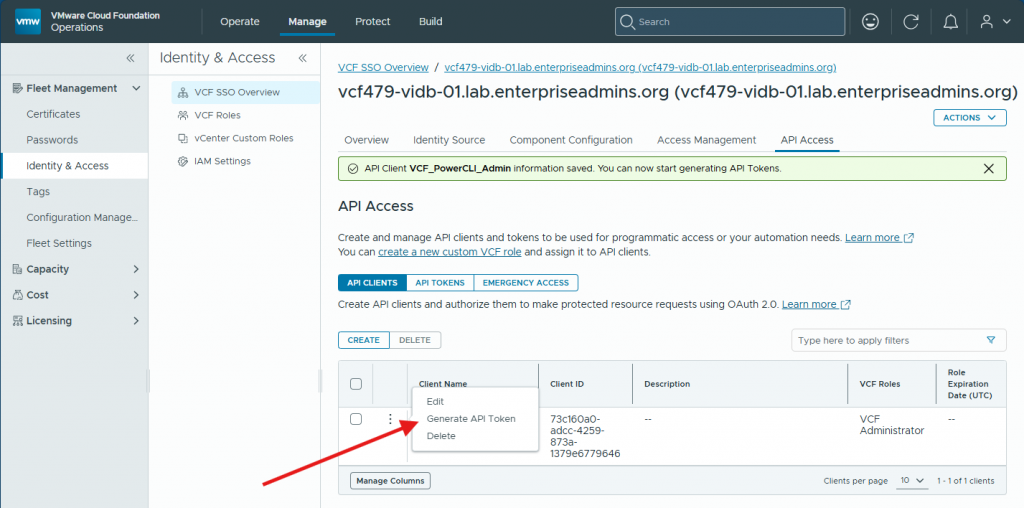

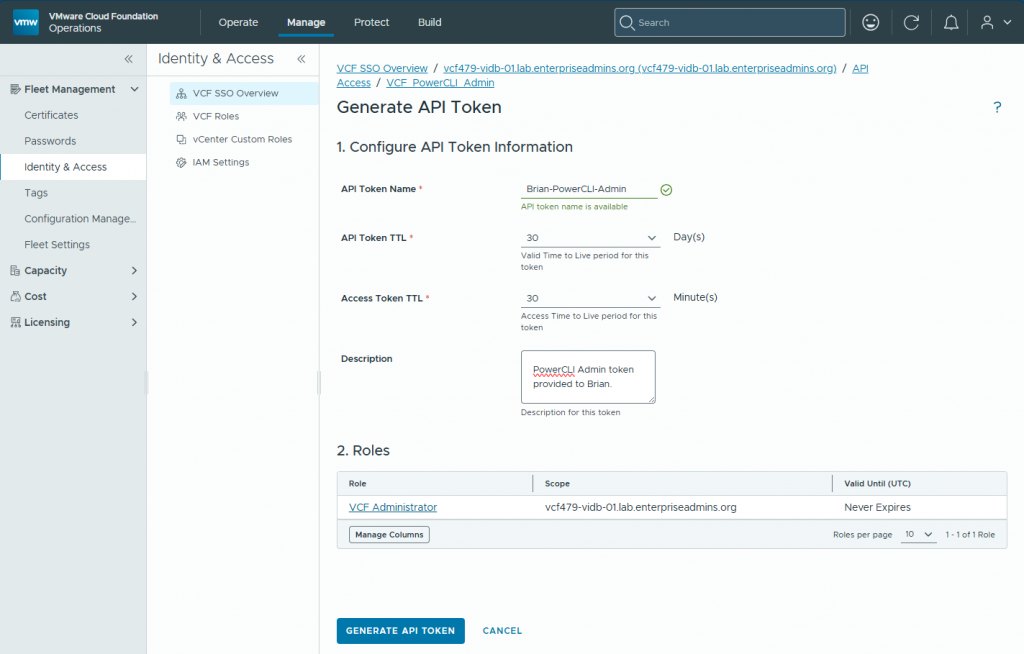

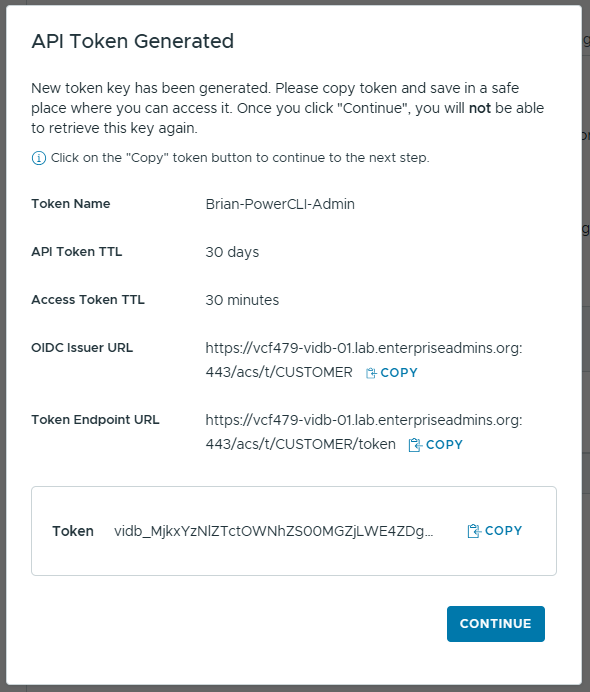

We can generate the API token in Manage > Identity & Access > VCF SSO > select identity broker instance > API Access tab, or by creating a personal access token under our profile > Generate API token > Generate.

Once we have an API Token we need to present it to VIDB. We can do this with the following POST command:

vidbExtraLongToken=vidb_ZmVlMzM5ZGYtYWZkYS00OTkzLTkxMW<redacted>

curl --request POST \
--url https://vcf479-vidb-01.lab.enterpriseadmins.org/acs/t/CUSTOMER/token \
--header 'content-type: application/x-www-form-urlencoded' \
--data grant_type=urn:custom:vcf:params:oauth:grant-type:api-token \
--data "api_token=$vidbExtraLongToken" \
--insecure

During testing, I discovered that the token parameter handling within telegraf-utils.sh expected a traditional Aria Operations token format directly.

In the script, I could see (around line 378) an entry that showed:

377- #set Authorization header for on-prem

378- AUTHORIZATION_HEADER="Authorization: OpsToken $VROPS_TOKEN"

(Line numbers added for reference)
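Line numbers may differ between versions of telegraf-utils.sh, so it can be easier to search for the assignment than to rely on line 378. A minimal sketch; the function name `find_auth_header` is hypothetical:

```shell
# List every line in the given script that assigns AUTHORIZATION_HEADER,
# prefixed with its line number.
find_auth_header() {
  grep -n 'AUTHORIZATION_HEADER=' "$1"
}
```

For example, `find_auth_header ./telegraf-utils.sh` prints each matching line so the on-prem entry can be located before editing.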

Proof of Concept Modification

As a proof of concept, I modified the authorization header handling to expect and present a standard Bearer token instead.

Example modification:

AUTHORIZATION_HEADER="Authorization: Bearer $VROPS_TOKEN"

Disclaimer: This modification should be considered a proof of concept only. Directly modifying bundled or vendor-provided scripts is generally not recommended. Use at your own risk.

After making this change, the remainder of the workflow from the original article functioned as expected in my VCF 9.1 environment.
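For anyone repeating this proof of concept, the edit can be scripted so that an untouched copy of the original is kept. This is only a sketch: the function name `patch_auth_header` and the `.orig` backup suffix are my own choices, and it rewrites every line containing the OpsToken header text shown above, which may be more than one line in other versions of the script.

```shell
# Keep a copy of the original script, then rewrite the OpsToken header to Bearer.
# Writing back through a redirect keeps the script's existing permissions.
patch_auth_header() {
  script="$1"
  cp -p "$script" "$script.orig" || return 1
  sed 's/Authorization: OpsToken \$VROPS_TOKEN/Authorization: Bearer $VROPS_TOKEN/' \
    "$script.orig" > "$script"
}
```

After running `patch_auth_header ./telegraf-utils.sh`, `diff telegraf-utils.sh.orig telegraf-utils.sh` shows exactly what changed, and copying the `.orig` file back reverts it.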

Installing Telegraf

This is the command I used to install Telegraf:

sudo ./telegraf-utils.sh opensource -c 192.168.10.21 -t "<crazy_long_bearer_token_from_prior_curl_command>" -v 192.168.10.21 -d /etc/telegraf/telegraf.d -e /usr/bin/telegraf -k 1

Here, 192.168.10.21 is the IP address of my Operations Collector / Cloud Proxy appliance. As before, I needed to change the ownership of the files in the /etc/telegraf/telegraf.d directory to telegraf:telegraf and restart the service with systemctl restart telegraf.
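The value passed to -t is the bearer token returned by the earlier curl request to VIDB. Rather than copying it by hand, it can be pulled out of the response. This sketch assumes the response is a standard OAuth 2.0 style JSON body with an `access_token` field, which I have not confirmed against VIDB documentation; the function name `extract_access_token` is hypothetical:

```shell
# Read a JSON token response on stdin and print the value of "access_token".
# Assumes the field exists and its value contains no escaped double quotes.
extract_access_token() {
  tr -d '\n' | sed -n 's/.*"access_token"[[:space:]]*:[[:space:]]*"\([^"]*\)".*/\1/p'
}
```

Piping the curl command into the function, for example `bearer=$(curl ... | extract_access_token)`, leaves the token in `$bearer`, which can then be passed as `-t "$bearer"`. If jq is installed, `jq -r .access_token` is a sturdier alternative to the sed expression.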

Final Thoughts

Outside of the repository signing changes and API token handling updates, the remainder of the original integration process still worked well in my testing.

If you previously implemented the Telegraf Open Agent integration and encounter:

- repository signing errors such as NO_PUBKEY DA61C26A0585BD3B

- or authentication issues with VCF 9.1 API tokens

the updates above should help with deployment in current environments.